Build a Simple OpenAI App in Python

Looking to get started with AI and automation? This guide shows how to build a simple terminal chatbot with Python and OpenAI’s API. In a few steps, beginners can go from a first line of code to a working app powered by GPT-3.5 or GPT-4. The tutorial walks through setting up your environment, installing dependencies, and writing code that sends requests to OpenAI and processes the responses. The finished chatbot takes fewer than 50 lines of Python.

Key Takeaways

- Set up your Python environment and generate your OpenAI API key

- Build a working chatbot with concise and readable Python code

- Learn how to handle responses and manage tokens efficiently

- Apply best practices to avoid excessive costs and hitting rate limits

What You Need Before You Start

This OpenAI API Python tutorial is designed for beginners. If you are new to APIs or Python, make sure you have the following:

- Python installed (version 3.8 or higher; recent releases of the openai package no longer support 3.7). Download it from the official Python website.

- A code editor such as VS Code, PyCharm, or any lightweight text editor

- Basic familiarity with the command line (Terminal or Command Prompt)

- An OpenAI account with an API key

Step-by-Step: Build Your First OpenAI Chatbot in Python

1. Set Up a Virtual Environment

To keep your project’s dependencies isolated, create a virtual environment:

    python -m venv openai_app
    cd openai_app
    source bin/activate  # On Windows: .\Scripts\activate

2. Install Necessary Dependencies

Install the OpenAI Python client along with the dotenv package:

    pip install openai python-dotenv

The dotenv package loads secrets, such as API keys, from a .env file so they stay out of your source code.

3. Prepare Your API Key

Log in to your OpenAI dashboard, create an API key, and then store it in a .env file in your project:

    OPENAI_API_KEY="your_api_key_here"

Keep this file private: add .env to your .gitignore and never upload it to a public repository.
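A missing or placeholder key usually only shows up as an authentication error on the first API call. The sketch below fails early with a clearer message instead. The helper name `get_api_key` is hypothetical and not part of the OpenAI library; it only reads the environment, so `load_dotenv()` would need to run before it in a real script.

```python
import os


def get_api_key(env=None, name="OPENAI_API_KEY"):
    """Return the API key from the environment or raise a clear error."""
    env = os.environ if env is None else env
    key = (env.get(name) or "").strip()
    # Treat the tutorial's placeholder value as missing too.
    if not key or key == "your_api_key_here":
        raise RuntimeError(f"{name} is not set. Add it to your .env file.")
    return key
```

Calling `get_api_key()` once at startup is one way to surface a configuration problem before the chat loop begins.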

4. Write the Minimal Python Chatbot Script

Save the following code as chatbot.py. This script allows you to converse with an AI model directly from your terminal:

    import os

    import openai
    from dotenv import load_dotenv

    # Load OPENAI_API_KEY from the .env file into the environment.
    load_dotenv()
    openai.api_key = os.getenv("OPENAI_API_KEY")


    def ask_openai(prompt, model="gpt-3.5-turbo"):
        """Send one prompt to the model and return its reply as text."""
        try:
            # openai>=1.0 replaced openai.ChatCompletion.create with this call.
            response = openai.chat.completions.create(
                model=model,
                messages=[{"role": "user", "content": prompt}],
            )
            answer = response.choices[0].message.content
            return answer.strip()
        except Exception as e:
            return f"Error: {e}"


    # Simple terminal loop: type "exit" or "quit" to stop.
    while True:
        user_input = input("You: ")
        if user_input.lower() in ["exit", "quit"]:
            break
        reply = ask_openai(user_input)
        print("Bot:", reply)

5. Run Your Chatbot

Start chatting by executing the script in your terminal:

    python chatbot.py

Enter queries or prompts, and the bot will respond. To stop the program, type exit or quit.
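The script sends each prompt on its own, so the model does not see earlier turns. One possible extension, sketched here as an assumption rather than part of the original script, is to keep a list of past messages and pass it as the `messages` argument. The helper name `add_turn` and the `max_messages` cap are hypothetical.

```python
def add_turn(history, role, content, max_messages=20):
    """Append one message and keep only the most recent max_messages."""
    history.append({"role": role, "content": content})
    # Drop the oldest messages so the request does not grow without bound.
    if len(history) > max_messages:
        del history[: len(history) - max_messages]
    return history


history = []
add_turn(history, "user", "Hello")
add_turn(history, "assistant", "Hi! How can I help?")
# `history` now has the shape the messages argument expects.
```

Capping the list is a blunt way to limit token usage; longer histories cost more on every request because the whole list is sent each time.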

Understanding the OpenAI Response Format

The API returns a structured JSON object. Important parts include:

- choices[0].message.content: This contains the model’s actual response

- usage: Displays token statistics for that request

- model: Indicates which model produced the response

Understanding this structure helps you optimize your prompts and manage token usage better. For a broader application of using AI to streamline repetitive work, see how GPT-4 and Python automate tasks efficiently.
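To make the fields above concrete, here is a small sketch that works on the JSON form of a response. The payload values are made up for illustration, and `summarize_response` is a hypothetical helper, not an OpenAI function.

```python
# Illustrative payload only: the values are invented, and the layout follows
# the parts named above (choices, usage, model).
sample_response = {
    "model": "gpt-3.5-turbo",
    "choices": [
        {"message": {"role": "assistant", "content": " Hello! "}}
    ],
    "usage": {"prompt_tokens": 9, "completion_tokens": 3, "total_tokens": 12},
}


def summarize_response(payload):
    """Pull the reply text, model name, and total token count from a payload."""
    return {
        "reply": payload["choices"][0]["message"]["content"].strip(),
        "model": payload["model"],
        "total_tokens": payload["usage"]["total_tokens"],
    }


print(summarize_response(sample_response))
```

Logging the `usage` numbers alongside each reply is a simple way to see how prompt length affects token consumption.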

OpenAI API Pricing, Rate Limits, and Token Management

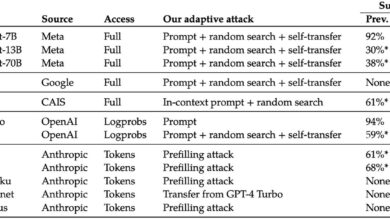

The cost of using OpenAI models depends on the number of tokens processed. Approximate prices at the time of writing (check OpenAI’s pricing page for current rates):

- GPT-3.5-Turbo: ~$0.0015 per 1K input tokens, ~$0.002 per 1K output tokens

- GPT-4: ~$0.03 per 1K input tokens, ~$0.06 per 1K output tokens
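The per-token prices above translate into a simple cost estimate. The following sketch hard-codes the approximate rates from this list, so it is only as current as those figures; `estimate_cost` and `PRICES_PER_1K` are hypothetical names.

```python
# Approximate USD prices per 1K tokens, copied from the list above.
PRICES_PER_1K = {
    "gpt-3.5-turbo": {"input": 0.0015, "output": 0.002},
    "gpt-4": {"input": 0.03, "output": 0.06},
}


def estimate_cost(model, input_tokens, output_tokens):
    """Estimate the USD cost of one request from its token counts."""
    rates = PRICES_PER_1K[model]
    input_cost = (input_tokens / 1000) * rates["input"]
    output_cost = (output_tokens / 1000) * rates["output"]
    return input_cost + output_cost


print(f"${estimate_cost('gpt-4', 500, 200):.4f}")
```

With these rates, a GPT-4 request with 500 input tokens and 200 output tokens comes to roughly $0.027, while the same request on GPT-3.5-Turbo costs a small fraction of that.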

When you create an account, you may receive free credits that allow limited usage at no charge. This is especially useful while learning or experimenting.

Smart Practices to Control API Costs

- Begin with shorter prompts and monitor how many tokens each call uses

- Set a monthly spending limit on the billing limits page of your OpenAI account

- Review API logs regularly to identify any excessive usage

- Use GPT-3.5-Turbo for cost-effective solutions and switch to GPT-4 only when required
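One way to act on the first practice is to keep a running total of tokens inside the app itself. The class below is a minimal sketch under that assumption; `TokenBudget` is a hypothetical name, and the token count passed to `record` would come from the `usage` part of each response.

```python
class TokenBudget:
    """Accumulate token usage across calls and flag when a limit is exceeded."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.used = 0

    def record(self, total_tokens):
        """Add one call's token count; return True while within budget."""
        self.used += total_tokens
        return self.used <= self.max_tokens

    @property
    def remaining(self):
        """Tokens left before the budget is exhausted (never negative)."""
        return max(self.max_tokens - self.used, 0)


budget = TokenBudget(max_tokens=1000)
if not budget.record(400):
    print("Token budget exceeded; stopping.")
```

This only tracks usage locally during one run. It complements, but does not replace, the spending limit set in your account.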

Error Handling for Stability

Real-world applications must be prepared for network interruptions, timeouts, or errors. Here is a version of the function that improves reliability with better error messaging:

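    def ask_openai(prompt, model="gpt-3.5-turbo"):
        """Send one prompt and return the reply or a readable error message."""
        try:
            # Uses the openai>=1.0 interface; exception classes now live at
            # the top level of the package instead of openai.error.
            response = openai.chat.completions.create(
                model=model,
                messages=[{"role": "user", "content": prompt}],
                timeout=10,  # seconds before the request is abandoned
            )
            return response.choices[0].message.content.strip()
        except openai.RateLimitError:
            return "Rate limit exceeded. Try again later."
        except openai.AuthenticationError:
            return "Invalid API key. Check your .env file."
        except openai.APITimeoutError:
            return "The request timed out. Try again."
        except Exception as e:
            return f"An error occurred: {e}"

GPT-3.5 vs GPT-4: Key Differences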

| Feature | GPT-3.5-Turbo | GPT-4 |
|---|---|---|
| Speed | Faster responses | Slower responses |
| Cost | Lower token price, more affordable at high volume | Higher token price |
| Context window | Up to 16,385 tokens | Up to 128,000 tokens (GPT-4 Turbo); 8,192 for the original GPT-4 |
| Reasoning | Suitable for light conversations | Better at reasoning and depth; generally more accurate |
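The token limits in the table can guide model choice. The sketch below prefers the cheaper model and falls back to GPT-4 only when the request would not fit. The limits are the upper bounds from the table and vary by model version, and the dictionary keys are the table's column labels rather than exact API model identifiers; both function names are hypothetical.

```python
# Upper-bound token limits taken from the table above; actual limits depend
# on the specific model version.
TOKEN_LIMITS = {"GPT-3.5-Turbo": 16_385, "GPT-4": 128_000}


def fits_limit(model, prompt_tokens, reply_tokens):
    """Return True if prompt and reply together fit the model's token limit."""
    return prompt_tokens + reply_tokens <= TOKEN_LIMITS[model]


def cheapest_fitting_model(prompt_tokens, reply_tokens):
    """Prefer GPT-3.5-Turbo, falling back to GPT-4 only when needed."""
    for model in ("GPT-3.5-Turbo", "GPT-4"):
        if fits_limit(model, prompt_tokens, reply_tokens):
            return model
    return None  # Too large for either model; the prompt must be shortened.
```

For a short chat prompt this returns the cheaper model, which matches the advice to switch to GPT-4 only when required.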

Downloadable Source Code

Access the complete chatbot project here: OpenAI Simple Chatbot on GitHub.

For a visual guide through the process, check out this YouTube walkthrough video.